The Media Buyer Is Dead. Here's What the Role Actually Looks Like in 2030.

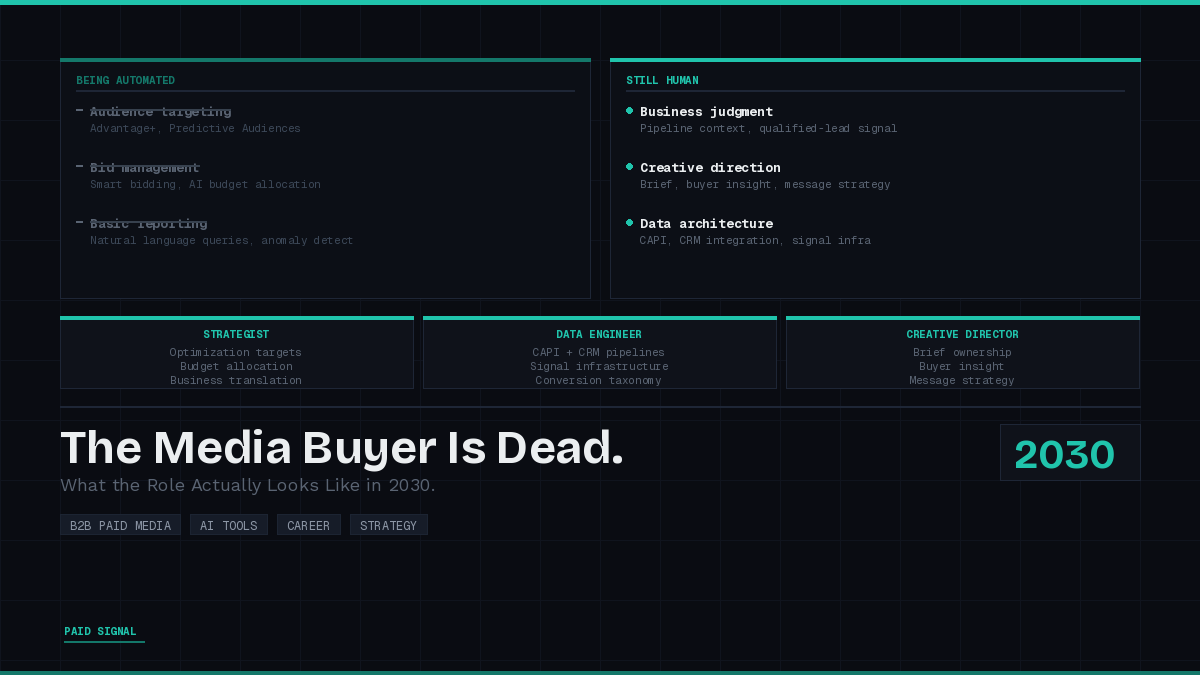

The media buyer isn't dead. But the version of the job most practitioners are currently doing is. Here's the honest picture of what survives and what doesn't.

Every few months, someone posts on X that the media buyer is dead. The post gets traction because it contains a real signal wrapped in a bad take. The signal: the job is changing faster than most practitioners are preparing for. The bad take: it's being eliminated.

But most of the defenses of the role get it wrong in the opposite direction. The usual argument is that certain things cannot be automated: creative insight, understanding the buyer, business judgment. That argument is getting weaker every year, and by 2030 it will mostly be false in its current form.

The more interesting question is what actually creates the moat. And the honest answer requires sitting with a tension that most hot takes on both sides of this debate skip over.

What Is Actually Going Away

Start with what is already leaving.

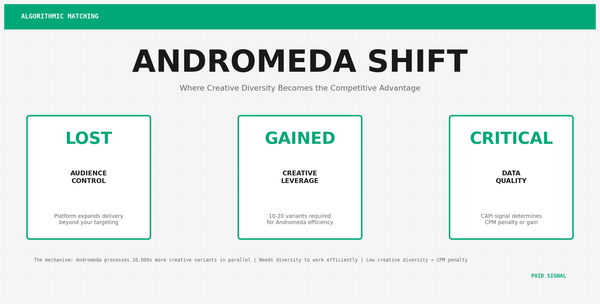

Manual audience targeting is nearly gone. Advantage+, Predictive Audiences, and broad match equivalents on every major platform have made hand-built audience lists a secondary input. The platform's signal pool is larger than anything you can construct manually.

Bid management is going. Smart bidding and AI-based budget allocation handle what used to require a full-time optimization workflow. The practitioner's job has shifted from setting the right bid to setting the right optimization target.

Basic reporting is going. Natural language queries are replacing manual report pulls. Anomaly detection is replacing manual performance audits. By 2030, a significant portion of what currently fills a media buyer's week will be handled by tools that did not exist when that practitioner built their skill set.

This is the real part of the argument. The execution layer is going. Acknowledge it. Manus AI inside Meta Ads Manager is the clearest current example of what this could look like long term.

The Counter-Argument Worth Taking Seriously

There is a legitimate pushback to the "just automate it" view, and it deserves a straight answer rather than a dismissal.

The argument: as AI-generated content floods every channel, the practitioners who develop genuine expertise and write from real experience become the differentiator. A generic model trained on the internet's average produces average output. Practitioners who build authentic points of view, write from actual experience, and do not outsource their thinking to a model will stand out precisely because everyone else is doing the opposite. Human-written content, grounded in real expertise, becomes more valuable as the alternative gets worse.

This is correct. And it applies directly to paid media creative. Accounts running AI-generated briefs built from generic inputs will look like every other account running AI-generated briefs. The creative will be competent. It will not be distinctive. At scale, competent and undistinctive is expensive.

Where the Two Views Actually Meet

Here is what changes the equation: not all AI inputs are the same.

The objection to AI-synthesized buyer insight assumes the synthesis is happening on generic data. Pull some industry reports, apply a framework, generate a brief. That version of the workflow produces generic output because the inputs are generic.

But consider a different version. You have 200 sales call recordings. You have webinar transcripts where your actual customers described their problems in their own words. You have product marketing research from a buyer study your org ran six months ago. You have churn interviews where customers explained exactly why they left.

These are not generic inputs. They are direct instances of real buyers speaking in their actual language about their actual hesitations. The best version of customer research, which practitioners in demand gen have been advocating for years, was always "talk to your customers." That insight, at scale, fed into a synthesis model, is not AI replacing human judgment. It is human judgment operating on a larger and more accurate evidence base than any individual practitioner could build manually.

The distinction that matters is not AI versus human. It is authentic customer signal versus generic synthetic input. A creative brief built from 200 real customer conversations is not a generic brief. It is likely more grounded than most briefs produced from intuition alone.

The Role That Survives

The practitioner who wins in 2030 holds both of these things at once.

They develop genuine expertise through doing the actual work, studying the outcomes, and building real opinions about what works and why. That expertise is what allows them to evaluate the machine's output critically rather than accepting it because it sounds authoritative. An AI brief generated from customer recordings still needs someone who knows enough to recognize when the synthesis pulled the wrong insight, emphasized the wrong fear, or missed the nuance that a practitioner who has been in this market would catch immediately.

They also understand how to architect the inputs. Which data sources actually reflect the ICP. How to structure sales recordings so the synthesis is pulling qualified-buyer conversations, not junk calls. How to connect CRM stages to optimization targets so the platform is training toward pipeline, not form fills. In large B2B organizations, this means working across sales, product marketing, and customer success to pull the data that already exists and put it to use. Most organizations have more authentic customer signal than they are using. The practitioner who knows how to access and apply it has an advantage that is hard to replicate.
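To make "architecting the inputs" concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption: the CRM stage names, the event names, the `matches_icp` flag, and the ten-minute threshold are hypothetical and not taken from any platform or CRM. It shows the two ideas above: form fills are deliberately not mapped to an optimization target, and only qualified-buyer calls pass through to synthesis.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical CRM stages mapped to the conversion event each should emit.
# None means "do not send this stage as an optimization target".
STAGE_TO_EVENT = {
    "form_fill": None,
    "sales_qualified": "QualifiedLead",
    "opportunity_created": "PipelineCreated",
    "closed_won": "ClosedWon",
}


@dataclass
class Call:
    call_id: str
    crm_stage: str       # furthest stage the account reached
    duration_min: float
    matches_icp: bool    # hypothetical flag maintained by sales or RevOps


def optimization_event(stage: str) -> Optional[str]:
    """Return the event to send for a CRM stage, or None if it should not train the platform."""
    return STAGE_TO_EVENT.get(stage)


def qualified_calls(calls: List[Call], min_minutes: float = 10.0) -> List[Call]:
    """Keep calls worth synthesizing: ICP match, real length, and past the form-fill stage."""
    return [
        c for c in calls
        if c.matches_icp
        and c.duration_min >= min_minutes
        and optimization_event(c.crm_stage) is not None
    ]
```

The point of the design is that the filter and the mapping live in one reviewable place, so a practitioner can argue about the thresholds with sales and product marketing instead of discovering them inside a prompt.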

The accountability layer remains unchanged. When the system produces a result and the business outcome does not follow, someone has to understand why, make a different call, and own it. An agent does not attend that meeting. A practitioner does.

What to Build Toward Now

The preparation question is practical. The skills that compound toward the 2030 role:

Real customer fluency. Not persona documents. Actual familiarity with how buyers in your market describe their problems, their hesitations, and what convinces them. This comes from being close to sales conversations, customer interviews, and post-sale research. Practitioners who have this can evaluate AI-synthesized insight critically. Practitioners who do not will accept whatever the model produces.

Data and signal architecture. Most B2B practitioners have surface-level knowledge of CAPI and conversion tracking. The deeper skill is understanding CRM architecture, offline data matching, conversion event taxonomy, and what makes a clean signal versus a noisy one. The automation is only as good as what you feed it. This is where most practitioners are underinvested.
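One rough way to think about clean versus noisy signal is to audit a batch of offline conversion events before it is sent anywhere. The sketch below is an assumption-laden example, not any platform's API: the event dictionaries, the field names `email` and `event_name`, and the report keys are all hypothetical. It normalizes and hashes emails with SHA-256 (a common requirement for offline matching, but check your platform's documentation), then counts events with no identifier and duplicate events.

```python
import hashlib
from collections import Counter
from typing import Dict, List


def hash_email(email: str) -> str:
    """SHA-256 of the trimmed, lowercased email."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def signal_report(events: List[Dict[str, str]]) -> Dict[str, int]:
    """Summarize how usable a batch of offline conversion events is."""
    total = len(events)
    # Events without an email cannot be matched back to a person.
    with_id = [e for e in events if e.get("email")]
    # The same person firing the same event twice counts as one usable signal.
    keys = Counter(
        (hash_email(e["email"]), e.get("event_name", "")) for e in with_id
    )
    duplicates = sum(n - 1 for n in keys.values())
    return {
        "total": total,
        "missing_identifier": total - len(with_id),
        "duplicates": duplicates,
        "usable": len(keys),
    }
```

A batch where `usable` is far below `total` is a noisy signal, whatever the dashboard says about event volume.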

Critical evaluation of AI outputs. This does not have a curriculum yet. It comes from running enough campaigns to develop calibrated skepticism about what the machine says versus what the data actually shows. Build that calibration now, while the stakes are lower.

A genuine point of view. Practitioners who write and think publicly about what they are seeing compound their expertise faster than those who do not. The ones who have been building real opinions, testing them, and publishing them will be the ones whose judgment is trusted when the machine needs oversight.

The job is not dying. It is becoming harder and more consequential. The practitioners who treat the next four years as a window to build the right capabilities will be significantly more valuable in 2030. Those who outsource all their thinking to models and those who refuse to engage with what is changing will both lose, for different reasons.

Paid Signal covers what's actually changing in B2B paid media on LinkedIn and Meta. Practitioner-first, no vendor spin. Twice a week.